This is one of the first demonstrator projects being released as part of my 1-click thesis strand of work in automating the production of substantial research reports. The projects, often interdisciplinary, will demonstrate how modern commercial LLMs can significantly reduce the amount of human work needed to create large high-quality reports. Each project is led by a human author but with LLMs in a major supportive and interactive role.

For projects like this, outside the author’s core competencies (though not outside his core interests), no warranties can be given as to the correctness of all details. LLMs are still prone to producing hallucinations even when checking processes are in place.

Summary for the hasty reader

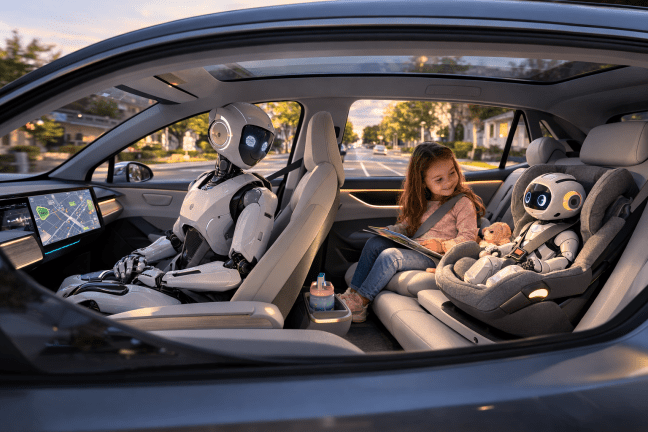

The next big bottleneck in AI may not be model size or compute. It may be access to grounded, real-world experience. If millions of embodied robots begin operating in homes, vehicles and care settings, they could generate filtered experience traces that improve LLM-plus systems far beyond what internet text alone can provide.

Child-size companion robots may be especially important because they open access to a domain that today’s AI models understand badly: children’s language-in-context and everyday micro-social interaction.

But this only works if the architecture is privacy-first: central systems should receive distilled updates, not intimate raw detail from children’s lives, except under tightly governed emergency rules. [1][2][3]

A small number of key references are included in this posting. A full set is also available.

Note that this report goes well beyond the state of the art, as is typical of such innovative projects, but we believe that many of the supporting claims are grounded in the literature.

A different way to think about Robot World

Most discussion of robots still starts with labour substitution: which jobs they might do, how much they might cost, and whether they will be humanoid, wheeled or something in between. That is important, but it misses a second and potentially more strategic effect.

A large installed base of robots would not just consume intelligence created elsewhere. It could also become a data engine for better AI.

That matters because disembodied AI has a weakness that fluent language processing can hide. Large language models are very good at talking about the world. They are much less reliable when they need a robust feel for how the world behaves: what is fragile, what is heavy, what a nervous child sounds like, what clutter does to navigation, what happens when an object slips, or how social routines vary through a day. Embodied systems can experience those things directly. [4][5]

Why robot fleets matter

A single household robot would learn useful lessons. A fleet of millions would do something more important: it would generate repeated, comparable and filterable experience across many settings. Instead of relying only on web text, future AI systems could learn from action–outcome pairs, interruption patterns, recovery from mistakes, object affordances, timing, spatial routines and social repair.

The key point is that raw sensor streams are not the prize. The prize is compressed experience. In other words, the value lies in extracting structured lessons from real life rather than vacuuming up endless video. A household robot does not need to send central systems every second of domestic life. It needs to return abstractions such as: this kind of cup is easy to tip; this floor becomes slippery when wet; children of this age often use this phrase to mean something else; this sort of transition tends to trigger frustration; this gesture predicts a request for help. [5][6]
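
One way to picture compressed experience is as a small record type. The following is a minimal sketch, assuming a hypothetical on-device event log; the names `RawEvent`, `Abstraction` and `compress`, and the `min_trials` threshold, are illustrative and do not come from any existing robot framework. The point is only that outcome rates leave the device while individual events do not.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RawEvent:
    """One locally observed action-outcome pair (hypothetical schema)."""
    object_type: str  # e.g. "tall_cup"
    action: str       # e.g. "push"
    outcome: str      # e.g. "tipped"


@dataclass(frozen=True)
class Abstraction:
    """A distilled lesson: how often an action on an object led to an outcome."""
    object_type: str
    action: str
    outcome: str
    rate: float
    trials: int


def compress(events: list[RawEvent], min_trials: int = 3) -> list[Abstraction]:
    """Reduce raw events to outcome rates, dropping thinly observed cases."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    outcomes: dict[tuple[str, str, str], int] = defaultdict(int)
    for e in events:
        totals[(e.object_type, e.action)] += 1
        outcomes[(e.object_type, e.action, e.outcome)] += 1
    result = []
    for (obj, act, out), n in sorted(outcomes.items()):
        trials = totals[(obj, act)]
        if trials >= min_trials:  # too few trials: keep the lesson local
            result.append(Abstraction(obj, act, out, n / trials, trials))
    return result


events = [
    RawEvent("tall_cup", "push", "tipped"),
    RawEvent("tall_cup", "push", "tipped"),
    RawEvent("tall_cup", "push", "stable"),
    RawEvent("tall_cup", "push", "tipped"),
    RawEvent("mug", "push", "stable"),
]
lessons = compress(events)
```

Here the four cup events collapse into two rate records, and the single mug observation is held back because it falls under the assumed trial threshold.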

The winning architecture is not surveillance

This is why the strongest architecture is likely to be:

on-device compression → privacy and safety filtering → fleet aggregation → distillation into shared models → redeployment.

That is a very different proposition from centralising raw footage or continuous home audio.
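
The staged architecture above can be sketched as a chain of small functions. This is a toy illustration under stated assumptions, not a proposed implementation: observations are plain string labels, the blocked prefixes and the `min_devices` threshold are hypothetical, and "distillation" is reduced to normalising counts as a stand-in for model training.

```python
from collections import Counter
from typing import Iterable

# Hypothetical label prefixes that must never leave the device.
BLOCKED_PREFIXES = ("name:", "address:", "quote:")


def compress_on_device(observations: Iterable[str]) -> Counter:
    """Stage 1: collapse a local observation stream into label counts."""
    return Counter(observations)


def privacy_filter(summary: Counter) -> Counter:
    """Stage 2: drop any label carrying identifying or narrative detail."""
    return Counter({k: v for k, v in summary.items()
                    if not k.startswith(BLOCKED_PREFIXES)})


def aggregate_fleet(summaries: list[Counter], min_devices: int = 2) -> Counter:
    """Stage 3: keep only labels reported by at least `min_devices` robots."""
    devices_per_label = Counter(label for s in summaries for label in s)
    total = Counter()
    for s in summaries:
        total.update(s)
    return Counter({k: v for k, v in total.items()
                    if devices_per_label[k] >= min_devices})


def distil(fleet_counts: Counter) -> dict[str, float]:
    """Stage 4: normalise counts into a shared prior (stand-in for training)."""
    n = sum(fleet_counts.values())
    return {k: v / n for k, v in fleet_counts.items()} if n else {}


robot_a = ["wet_floor_slip", "wet_floor_slip", "name:alice"]
robot_b = ["wet_floor_slip", "cup_tipped"]
local = [privacy_filter(compress_on_device(r)) for r in (robot_a, robot_b)]
shared_prior = distil(aggregate_fleet(local))  # Stage 5 would redeploy this
```

In this example the identifying label is removed on the device, and the cup observation seen by only one robot is dropped at aggregation, so only the lesson shared across the fleet reaches the central model.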

Technically, that points towards a hybrid LLM-plus stack:

- language models for explanation and planning

- multimodal perception systems for local sensing

- embodied control models for action

- memory systems for episodic experience, and

- aggregation layers that learn from fleets without indiscriminate data transfer.

Some of the enabling logic already exists in adjacent work on embodied AI, multimodal models, federated learning and robot operating systems. The child-robot case simply makes the privacy requirement much harder and much more important. [1][4][7]
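
Since federated learning is named above as one enabling technique, the aggregation layer can be illustrated with a minimal federated-averaging sketch: each device reports a locally trained weight vector plus its sample count, and the server computes a weighted mean without seeing the underlying data. The function name and the toy weight vectors are assumptions for illustration; a real deployment would probably also need safeguards such as secure aggregation or differential privacy, which are not shown here.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Weighted mean of per-device weight vectors, weighted by sample count."""
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no samples reported")
    dim = len(updates[0][0])
    avg = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("weight vectors differ in length")
        for i, w in enumerate(weights):
            avg[i] += w * n / total
    return avg


# Two hypothetical devices: (local weights, number of local samples).
device_updates = [([1.0, 0.0], 10), ([0.0, 1.0], 30)]
global_weights = federated_average(device_updates)
```

The device with more local experience pulls the shared weights further towards its own, while neither device's raw observations are transferred.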

Why child-size robots may matter more than expected

The most original part of this argument concerns child-size companion robots. There is already a large literature on social robots in education, but much of it frames robots as tutors, classroom assistants or quasi-teachers. That, in our view, is not the most persuasive route.

A more realistic and socially acceptable route may be the companion model: not a robotic pedagogue, and certainly not a substitute parent, but something closer to an intelligent teddy or play companion with tightly bounded capabilities.

I wish my teddy could really play with me!

Why does that matter for AI development? Because children inhabit a domain that current models understand only weakly. Child language is often fragmentary, playful, gesture-heavy, emotionally charged and deeply contextual. Many meanings never appear clearly in cleaned text corpora.

A child-size robot embedded in ordinary routines could encounter transition anxiety, invented vocabulary, comfort rituals, toy ecologies, misunderstandings, sudden topic shifts and the micro-social negotiations of everyday childhood.

That kind of data is rare, and it is exactly the sort of thing text-trained systems miss. [2][3][8]

But only under strict social limits

This is also where the strongest caution is needed. The fact that robots could learn from children does not mean they should become little teachers, secret-keepers or emotional monopolists. The proposed social contract has to be stricter than that.

A child-size robot should have very limited physical strength, conservative movement speeds, obvious adult override, a visible kill-switch, and clear rules against secrecy, coercion or manipulative emotional dependency. It should listen to children, but remain bounded by adult-set permissions and safety policy.

Most importantly, it should not function as a domestic informant. Central systems should receive distilled learning signals, not narrative disclosure about family life. Only narrowly defined emergency situations should justify escalation beyond that. Current policy and consumer-safety work on AI companions and smart toys strongly supports this caution. [1][2][3][8]
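
The "not a domestic informant" rule can be expressed as a default-deny gate on outbound messages. The sketch below is an illustration under stated assumptions: the message kinds, the `Outbound` type and especially the emergency categories are hypothetical placeholders, and any real allow-list would have to be set by policy rather than by a developer.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    DISTILLED_SIGNAL = "distilled_signal"  # aggregated counts or model updates
    NARRATIVE = "narrative"                # transcripts, accounts of family life
    EMERGENCY = "emergency"                # flagged safety event


# Hypothetical, deliberately short allow-list of emergency categories.
EMERGENCY_CATEGORIES = frozenset({"medical", "fire"})


@dataclass(frozen=True)
class Outbound:
    kind: Kind
    category: str = ""


def may_leave_device(msg: Outbound) -> bool:
    """Return True only if this message is allowed to be sent off-device."""
    if msg.kind is Kind.DISTILLED_SIGNAL:
        return True
    if msg.kind is Kind.EMERGENCY:
        return msg.category in EMERGENCY_CATEGORIES
    return False  # narrative disclosure is blocked by default
```

The design choice worth noting is the final line: anything not explicitly permitted stays on the device, so adding a new message kind cannot silently widen what is disclosed.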

The economic point that may be missed

Seen this way, Robot World creates two value streams.

- The first is the obvious one: practical services, assistance and productivity.

- The second is subtler but potentially immense: the generation of grounded learning data for future AI. If large fleets of robots operate in homes, vehicles and care settings, then deployment itself becomes part of the improvement loop.

That helps explain why embodied AI may attract such intense investment even before the hardware is perfect. The eventual prize is not just the sale of robots. It is the creation of a feedback system in which embodied experience improves central models, and better models improve the next generation of robots.

In that sense, Robot World is not just a market for devices. It may become an LLM training economy. [6][7]

A final practical conclusion

The most important message is simple. The route to better AI may not lie only in more internet text, more synthetic data or larger clusters. It may lie in building systems that can move through the world, learn from consequences, and return only the right abstractions to the wider model ecosystem.

If that is correct, then child-size companion robots deserve attention for reasons that go well beyond novelty. They may become one of the few ways to open up a poorly modelled human domain while keeping the design socially bounded. But that opportunity will only be legitimate if privacy, governance and emotional restraint are designed in from the start.

The real challenge, then, is not whether robots can become smarter.

It is whether Robot World can be built in a way that turns embodied experience into shared intelligence without turning childhood into a data mine.

Selected references

[1] United Nations Children’s Fund (UNICEF) (2025) Guidance on AI and Children 3.0. Florence: UNICEF Innocenti. Available at: https://www.unicef.org/innocenti/media/11991/file/UNICEF-Innocenti-Guidance-on-AI-and-Children-3-2025.pdf

[2] Common Sense Media (2025) CSM AI Risk Assessment: Social AI Companions. San Francisco, CA: Common Sense Media. Available at: https://www.commonsensemedia.org/sites/default/files/pug/csm-ai-risk-assessment-social-ai-companions_final.pdf

[3] Brookings Institution (2025) Policy guardrails needed as babies around the world begin to interact with AI. Washington, DC: Brookings. Available at: https://www.brookings.edu/articles/policy-guardrails-needed-as-babies-around-the-world-begin-to-interact-with-ai/

[4] Asada, M., Hosoda, K., Kuniyoshi, Y., Ishiguro, H., Inui, T., Yoshikawa, Y., Ogino, M. and Yoshida, C. (2009) ‘Cognitive developmental robotics: A survey’, IEEE Transactions on Autonomous Mental Development, 1(1), pp. 12–34. Available at: https://www.cs.tufts.edu/comp/150DR/readings/week1/Asada09g.pdf

[5] Heinrich, S., Yao, Y., Hinz, T., Liu, Z., Hummel, T., Kerzel, M., Weber, C. and Wermter, S. (2020) ‘Crossmodal language grounding in an embodied neurocognitive model’, Frontiers in Neurorobotics, 14, article 52. Available at: https://www.frontiersin.org/journals/neurorobotics/articles/10.3389/fnbot.2020.00052/full

[6] McKinsey Global Institute (2025) Agents, Robots, and Us: Skill Partnerships in the Age of AI. New York, NY: McKinsey Global Institute. Available at: https://www.mckinsey.com/mgi/our-research/agents-robots-and-us-skill-partnerships-in-the-age-of-ai

[7] Arm Newsroom (2025) Is this the Android moment for mobile robots? Cambridge: Arm. Available at: https://newsroom.arm.com/blog/robot-operating-system-iot-r2c2

[8] Fosch-Villaronga, E. and Poulsen, A. (2023) ‘Toy story or children story? Putting children and their rights at the forefront of the artificial intelligence revolution’, AI & Society. Available at: https://link.springer.com/article/10.1007/s00146-021-01295-w
